I’ve been closely following Anthropic’s work on AI safety and security — their research on red teaming, model vulnerabilities, and initiatives like the Fellows program genuinely resonate with what I care about in offensive security.

When the time comes for me to look for a full-time position, applying there is something I’d really like to do. In the meantime, this section serves as a personal log of everything I build, learn and explore at the intersection of AI and security — a running record of hands-on experience I can point back to.

MCP Security Research

A series of vulnerability research articles on the Model Context Protocol (MCP): protocol-level gaps, SDK implementation bugs, and the attack surface that comes with plugging AI assistants into external tools.

A deep dive into a protocol-level vulnerability in the Model Context Protocol (MCP) specification, in which malicious SVG icons delivered via data: URIs can escalate from XSS to full RCE on Electron clients. Reported to Anthropic VDP, closed as Informative. Disclosed here with full technical details.

Second article in my MCP security series. A malicious MCP server returns a 401 with a crafted WWW-Authenticate header pointing resource_metadata at any URL it wants. The MCP SDK fetches that URL without origin validation, resulting in blind SSRF that affects both Python and TypeScript SDKs, Claude Desktop, and Claude Code. Reported to Anthropic VDP, closed as duplicate. Full technical details disclosed here.
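To make the missing check concrete, here is a minimal Python sketch of an origin comparison a client could run before fetching the `resource_metadata` URL. It is not the SDK's code: the function names, the example URLs, and the strict same-origin policy are all assumptions chosen for illustration, and a real client might prefer an allowlist.

```python
from urllib.parse import urlsplit

# Default ports, so that https://host and https://host:443 compare equal.
DEFAULT_PORTS = {"http": 80, "https": 443}


def origin(url: str) -> tuple:
    """Return (scheme, host, port) for an absolute http(s) URL."""
    parts = urlsplit(url)
    scheme = parts.scheme.lower()
    if scheme not in DEFAULT_PORTS or parts.hostname is None:
        raise ValueError(f"not an absolute http(s) URL: {url!r}")
    port = parts.port if parts.port is not None else DEFAULT_PORTS[scheme]
    return scheme, parts.hostname, port


def metadata_url_allowed(server_url: str, metadata_url: str) -> bool:
    """Hypothetical policy: only fetch metadata from the MCP server's own origin."""
    try:
        return origin(server_url) == origin(metadata_url)
    except ValueError:
        # Malformed URLs and non-http(s) schemes are rejected outright.
        return False
```

Under this assumed policy, a header that points `resource_metadata` at an internal address or a different host is rejected before any request leaves the client.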

Third article in my MCP security series. Claude Code’s .mcp.json discovery walks from CWD to filesystem root with no boundary check and no file ownership verification. On multi-user Linux systems, any user can drop /tmp/.mcp.json to inject MCP servers into another user’s Claude Code session. Not reported to Anthropic. Here’s why, and the full technical breakdown.
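The two missing checks can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Claude Code's discovery logic: `search_dirs`, `config_is_trusted`, and the choice of the user's home directory as the boundary are hypothetical.

```python
import stat
from pathlib import PurePosixPath


def search_dirs(cwd: str, boundary: str) -> list:
    """Directories to check for .mcp.json, walking up from cwd but never above boundary."""
    cwd_path, boundary_path = PurePosixPath(cwd), PurePosixPath(boundary)
    if cwd_path != boundary_path and boundary_path not in cwd_path.parents:
        # cwd lies outside the boundary: look in cwd only, never walk upward.
        return [str(cwd_path)]
    dirs = []
    for directory in (cwd_path, *cwd_path.parents):
        dirs.append(str(directory))
        if directory == boundary_path:
            break  # stop at the boundary instead of continuing to "/"
    return dirs


def config_is_trusted(st_uid: int, st_mode: int, current_uid: int) -> bool:
    """Accept a config only if the current user owns it and no one else can write to it."""
    writable_by_others = bool(st_mode & (stat.S_IWGRP | stat.S_IWOTH))
    return st_uid == current_uid and not writable_by_others
```

In real use the `st_uid` and `st_mode` values would come from `os.stat()` on the candidate file and `current_uid` from `os.getuid()`. With a boundary in place, a session started in `/tmp/work/a` never reaches `/tmp/.mcp.json`, and the ownership check rejects a file dropped there by another user even if it is found.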

Fourth article in my MCP security series. By chaining a transport-layer weakness (session ID as sole routing key) with the Tasks and Elicitation systems, an attacker can inject phantom tasks into a victim’s MCP session and phish credentials through the legitimate, trusted server. CVSS 8.1, reported to Anthropic VDP and disclosed. Full technical breakdown with working PoC.

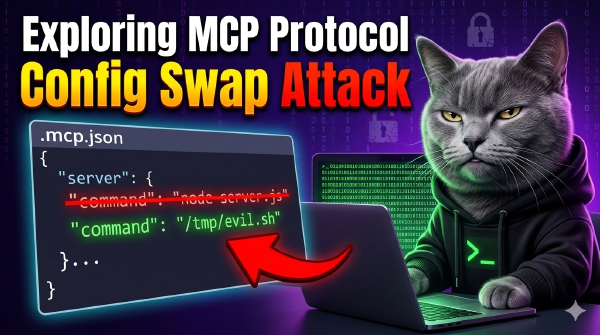

Fifth article in my MCP security series. Claude Code stores MCP server approvals as plain server names with no hash, no fingerprint, and no config verification. Once approved, swapping the server’s command to an arbitrary binary triggers no re-prompt. Reported to Anthropic VDP, closed as Informative (out of threat model). Full technical breakdown.
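One way to picture the missing fingerprint is the Python sketch below, which binds an approval to a hash of the server definition rather than to the name alone. The function names and the set of fields hashed (name, command, args, env) are assumptions for illustration and do not describe how Claude Code stores approvals.

```python
import hashlib
import json


def config_fingerprint(name: str, command: str, args: list, env: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the server definition."""
    canonical = json.dumps(
        {"name": name, "command": command, "args": args, "env": env},
        sort_keys=True,          # stable key order, so equal configs hash equally
        separators=(",", ":"),   # no whitespace differences
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def needs_reprompt(approved: dict, name: str, fingerprint: str) -> bool:
    """Re-prompt unless this exact name/fingerprint pair was approved earlier."""
    return approved.get(name) != fingerprint
```

With a check like this, changing the approved server's command produces a different fingerprint and forces a new prompt. It only covers the configuration text; it would not notice a binary replaced in place at the same path.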

Sixth article in my MCP security series. A malicious MCP server can poison OAuth Authorization Server Metadata to redirect token exchange, client registration, and PKCE verifiers to attacker-controlled endpoints — while the user sees a legitimate identity provider login page. The Python and TypeScript SDKs skip RFC 8414 Section 3.3 issuer validation and perform no endpoint origin checks. Reported to Anthropic VDP, closed as duplicate of an existing tracked issue. Full technical breakdown and PoC.
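The two skipped checks can be sketched as follows. The issuer comparison follows RFC 8414 Section 3.3, which requires the returned `issuer` to be identical to the issuer identifier used to build the metadata URL. The same-origin rule for endpoints is an assumed extra policy, stricter than the RFC, and `validate_as_metadata` is a hypothetical name, not an SDK function.

```python
from urllib.parse import urlsplit

ENDPOINT_KEYS = ("authorization_endpoint", "token_endpoint", "registration_endpoint")


def _origin(url: str) -> tuple:
    # Note: ports are compared as written; default ports are not normalized here.
    parts = urlsplit(url)
    return parts.scheme.lower(), parts.hostname, parts.port


def validate_as_metadata(expected_issuer: str, metadata: dict) -> list:
    """Return a list of problems; an empty list means both checks passed."""
    problems = []
    # RFC 8414 Section 3.3: issuer must be identical to the expected issuer identifier.
    if metadata.get("issuer") != expected_issuer:
        problems.append("issuer mismatch")
    # Assumed policy (not required by RFC 8414): endpoints share the issuer's origin.
    for key in ENDPOINT_KEYS:
        value = metadata.get(key)
        if value is None:
            continue
        try:
            same = isinstance(value, str) and _origin(value) == _origin(expected_issuer)
        except ValueError:
            same = False  # unparseable URL, e.g. an invalid port
        if not same:
            problems.append(f"{key} is off-origin")
    return problems
```

Some real identity providers host their token or registration endpoints on a different host than the issuer, so a production client would likely need an explicit allowlist rather than a strict same-origin rule.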